“Excuse me, miss. Do I understand you were looking for a particular AF [Artificial Friend]? One you’d seen here before?”

“Yes, ma’am. You had her in your window a while back. She was really cute, and really smart. Looked almost French? Short hair, quite dark, and all her clothes were like dark too and she had the kindest eyes and she was so smart.”

— Kazuo Ishiguro, Klara and the Sun (2021)

After China closed its borders to foreign nationals due to the COVID-19 pandemic, I was not able to travel to Shanghai to conduct ethnographic research for my PhD, as I had originally intended. My project, which aimed to explore the racialized experiences of white entrepreneurs from Europe and the US through an investigation of foreign businesses and start-ups, had to be radically reconfigured. Like many anthropologists, I turned to online research, combining archival data collection with media analysis and doing online interviews with participants through social media platforms. During two years of digital ethnographic research, I structured my fieldwork as an open-ended process in which I collaborated with my interlocutors to design and shape my project (Pink et al. 2016). In an effort to apprehend their racialized performances as skilled migrants in China’s business sector, I asked them careful questions about their migration trajectories and shared articles with them about China’s business sector to prompt our conversations. In the process, I came across what was for me a new area of inquiry: the construction of whiteness within China’s artificial intelligence (AI) industry.

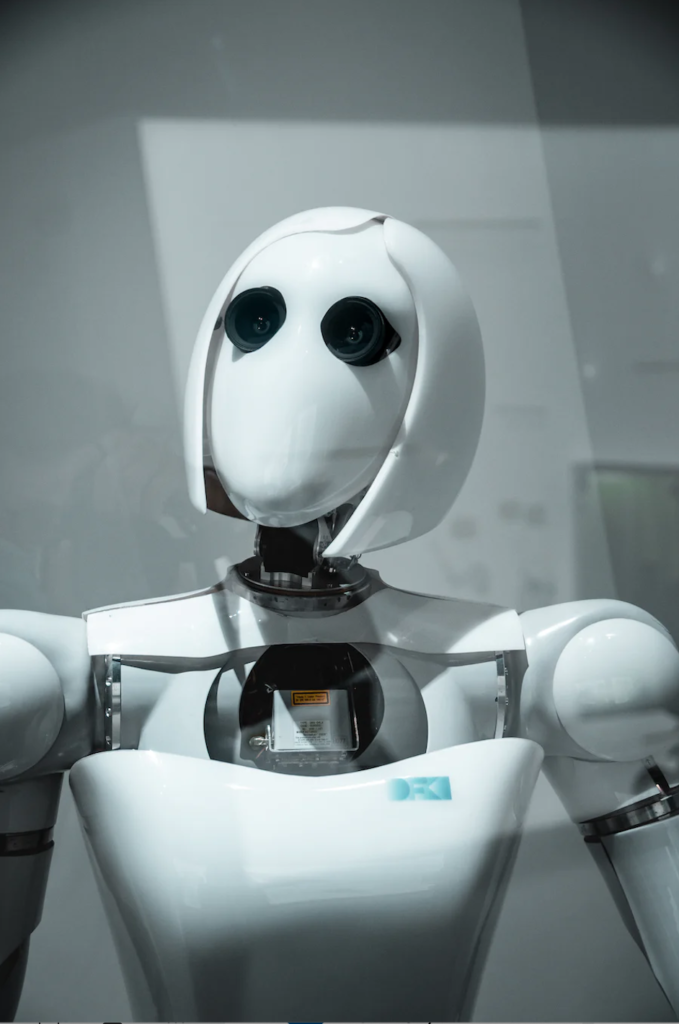

My entry into the racialized world of AI in China took place when one of my interlocutors, Liam (pseudonym) from the UK, sent me a photo from a 2017 event in the city of Shenzhen (the “Silicon Valley” of China). The photo showed an AI robot sketching pictures while wearing a black beret, a fake moustache, and a pair of glasses. Under the photo, people on China’s social media platforms (mainly WeChat and Weibo) commented that it looked like a French painter. Liam had included a short message to me in English, in response to our conversation about what it was like for him to be a white migrant in China: “white? Who is white? Are you looking for someone particular? This is maybe [sic] your future skill[ed] research participant!” Liam characterized the robot as “white” not only because of the shiny white material from which its “skin” was made, but also because he was perceiving it through a lens of racialized stereotypes that conflated whiteness and certain forms of skilled labor. I stared at his message for quite some time while considering the implications of AI technology for my research. I thought back to Kazuo Ishiguro’s dystopian novel, Klara and the Sun. The narrator of the story is a doll-like, solar-powered AI robot named Klara who provides companionship to a privileged teenage girl. Throughout the book, Klara is racialized and gendered as a cute woman with neat short hair who “looks” French. Encountering Klara, the reader cannot but wonder what makes someone human. I recalled feeling overwhelmed by thoughts of human-machine relationships, and this in turn made me interrogate my own ideas and preconceived notions about AI-supported futures. I wanted to learn more. I wondered: Could I gain access to how these futures were being imagined and actualized in China’s AI industry right now? And further, what role did race play in this industry, and how was AI technology itself racialized?

AI robots as gendered and racialized. Photo credit: Unsplash.

The rapid rise of AI technology in China has generated new theoretical and practical questions about the relationship between people and machines. Looking beyond algorithms and machine learning systems, I turned my focus to China’s efforts to become the global leader in AI technology. I started to collect social media posts, updates, and news articles detailing the growth of the country’s AI industry, organizing into categories the main activities for which AI systems are used (and are slated for future use) throughout China. I also reached out to small start-up companies working on and with AI technology. I asked my interlocutors questions about China’s technological capacity to construct and develop AI tech systems and robots with distinctive features, such as human characteristics. I also accepted invitations to participate in online events in China related to AI and AI development systems. Thus, I created a mosaic of different methods to observe this world that was new to me.

It would be wrong to assume that my data collection process afforded me open access to this field of study. What it did do, however, was open new horizons for further research on the ethics of using technological power for surveillance purposes, as China mainly does. Crawford (2021) argues that “AI is neither artificial nor intelligent. Rather, artificial intelligence is both embodied and material, made from natural resources, fuel, human labour, infrastructures, logistics, histories, and classifications.” In my research, I found that AI applications are used in nearly every human domain and are portrayed as offering distinct advantages both in industrial development and in the realm of surveillance. A key question that emerged for me in all of this, however, was why these ground machineries were constructed as “tech skill talents” with human characteristics, like the robot in Shenzhen.

Shifting my focus to contemporary configurations of whiteness in Chinese tech industries, I tried to first unpack the meanings of “skill” and “skilled work” among white migrants in China. Many scholars argue that geographic mobility can be a factor in the definition of skills (Liu-Farrer, Yeoh, and Baas 2020). According to Farrer’s (2019) research on foreign migrants in Shanghai, race and nationality are indispensable in defining the qualities of skills. Studies on transnational corporate migrants in Asia frequently focus on white westerners and view “skills” through a racialized prism that privileges whiteness (Camenish and Suter 2019; Farrer 2019). Writing about whiteness in China more broadly, Lan (2021:3557) states that “the reconfiguration of whiteness in China is mediated not only by skin color but by diverse factors such as citizenship, class, gender, age, field of employment, English and Chinese language proficiency, and professional qualifications.” Scholars have also noted the territorially bounded nature of whiteness (Lan 2011; Lundström 2014). Because white skin is often perceived as a marker of foreign-trained skill in China’s business and technology sectors, white workers experience privilege. Nevertheless, given that racial stereotypes frequently depend upon homogenization and othering, the privileges they may encounter due to whiteness are somewhat contingent and may swiftly transform into precariousness. How, then, are we to understand the construction of whiteness within the AI industry, and the racialization of AI applications themselves, within this larger context of racialized labour and white privilege?

My ongoing research within the AI sector has led me to perceive a new turn in China’s labor market in which the government itself is constructing AI robots and tech systems that are gendered and racialized, oftentimes marked as distinctly foreign by their appearance and their embodied characteristics. The applications are designed to serve as skill tech systems in a wide range of business domains. The robot that looked like a French painter, covered as it was with a white plastic humanoid shell, not only looked more human than machine, but also looked distinctly white. It is worth noting that while physical characteristics like the robot’s white “skin” are the primary markers delineating a racial frame, AI also embodies less tangible (invisible or only semi-visible) “cultural characteristics” that are themselves racialized. In my research, I thus turned to consider the place of whiteness within this racialized framework that dominates China’s AI industry across different business fields: AI digital humans who are able to have multi-turn conversations with users in Chinese and English; foreign and local fashion brands and e-commerce platforms, which present virtual designs on virtual white-presenting humans; virtual idols with fully AI-developed characteristics; and Chinese firms that teach English to students by using AI algorithms to curate their courses. My intention is thus to look across China’s AI sector as an anthropologist to see how whiteness is constructed within, and itself constructs, the development of AI in China.

Many of my white interlocutors who worked within China’s business sector discussed the privileges they encountered during business meetings with Chinese clients due to their skin color, and mentioned that their degrees from prestigious western universities gave them status in China. Many of them also mentioned the importance of branding their businesses in a way that highlights their nationality, their training, their distinct skill sets, and their English language proficiency. Still, most demonstrated limited awareness of the reproduction of whiteness in China’s tech industry, even when they recognized the privileges afforded to them in their daily lives and as actors in China’s thriving market economy. The ethnographic challenges I faced at the outset of my fieldwork could have caused me to overlook the work of whiteness in AI. Instead, I turned my focus to the racialization of robots and AI systems, demonstrating how these might inscribe and further project a “white utopia imagination” (Cave and Dihal 2020).

My ongoing involvement with this research thus uncovered a new topic for investigation in China’s machine production: a racially constructed technology which produces robots and AI systems that in turn reinforce the global hegemony of whiteness.

Acknowledgements

My PhD research is part of the ERC-funded anthropological research project China-White, initiated at the University of Amsterdam. Warm thanks to Katie Kilroy-Marac for editorial assistance, which was instrumental in shaping this reflection on my ethnographic research.

References

Camenish, Aldina, and Brigitte Suter. 2019. “European Migrant Professionals in Chinese Global Cities: A Diversified Labour Market Integration.” International Migration 57 (3): 208-221.

Cave, Stephen and Dihal, Kanta. 2020. “The Whiteness of AI.” Philosophy and Technology 33:685-703.

Crawford, Kate. 2021. Atlas of AI. Power, Politics, and the Planetary Costs of Artificial Intelligence. New Haven and London: Yale University Press.

Farrer, James. 2019. International Migrants in China’s Global Cities: The New Shanghailanders. London: Routledge.

Lan, Shanshan. 2021. “The foreign bully, the guest and the low-income knowledge worker: performing multiple versions of whiteness in China.” Journal of Ethnic and Migration Studies 48(15):3544-3560.

Lan, Pei-Chia. 2011. “White Privilege, Language Capital and Cultural Ghettoization: Western High-Skilled Migrants in Taiwan.” Journal of Ethnic and Migration Studies 37 (10): 1669–1693.

Liu-Farrer, Gracia, Brenda S. Yeoh, and Michiel Baas. 2020. “Social Construction of Skill: An Analytical Approach Toward the Question of Skill in Cross-Border Labour Mobilities.” Journal of Ethnic and Migration Studies 47 (10):1–15.

Pink, Sarah, Horst, Heather, Postill, John, Hjorth, Larissa, Lewis, Tania and Tacchi, Jo. 2016. Digital Ethnography. Principles and Practice. New York: Sage.

Christina Kefala is a PhD candidate at the University of Amsterdam (Department of Anthropology). Her project, titled “The Reconfiguration of Whiteness in China: Privileges, precariousness, and racialized performances,” is part of a European Research Council (ERC)-funded anthropological research project called China-White. Her study focuses on young foreign entrepreneurs and businesses in China and is organized around four themes: the impact of COVID-19 on foreign entrepreneurs, the gendered and racialized performances of foreign young women in online businesses, foreign entrepreneurs in China’s Silicon Valley, and independent/creative businesses in Hong Kong. She is currently a visiting PhD researcher at the University of California, Berkeley.

Cite As: Kefala, Christina. 2022. “An Ethnographic Encounter with ‘White’ AI,” American Ethnologist website, 25 November 2022 [https://americanethnologist.org/online-content/essays/an-ethnographic-…christina-kefala/]